Playing with scores

Sorting is difficult, isn't it?

Currently, there is a bug (https://github.com/distrochooser/distrochooser/issues/110) in the scoring method of the Distrochooser 5 beta.

The next Distrochooser "counts" in three dimensions:

- Positive hits (P)

- Negative hits (N)

- Blocking hits ("no go") (B)

- Neutral hits don't count at all

What is "expected"?

Let's first discuss how distributions should be sorted. An example:

- Distribution A: 5 P 0 N 0 B

- Distribution B: 5 P 1 N 0 B

- Distribution C: 5 P 0 N 1 B

How should they be sorted? They should end up close together, as they all scored 5 positive hits. Compared to C, distribution B has no blocking (B) hit, but one negative (N) hit. As blocking hits are more severe than negative hits, you would order them A > B > C. If B had 1 blocking hit in addition to its negative hit, the order would have been A > C > B.
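The ordering rule described above can be sketched as a sort with a compound key. This is only an illustration, not the Distrochooser implementation: the `Hits` type and `expected_order` function are hypothetical names, and the sketch assumes that more positive hits always rank first, with blocking hits and then negative hits acting as tie-breakers.

```python
from typing import NamedTuple


class Hits(NamedTuple):
    """Hit counts for one distribution (hypothetical helper type)."""
    name: str
    positive: int
    negative: int
    blocking: int


def expected_order(distros):
    # Assumption: more positive hits rank first; among equal positives,
    # fewer blocking hits win, and fewer negative hits break remaining ties.
    return sorted(distros, key=lambda d: (-d.positive, d.blocking, d.negative))


# The three example distributions from the text, deliberately shuffled.
sample = [
    Hits("C", 5, 0, 1),
    Hits("B", 5, 1, 0),
    Hits("A", 5, 0, 0),
]
ranked = [d.name for d in expected_order(sample)]
print(" > ".join(ranked))  # A > B > C
```

Giving B a blocking hit on top of its negative hit (`Hits("B", 5, 1, 1)`) moves it behind C, which matches the A > C > B case above.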

Playing around

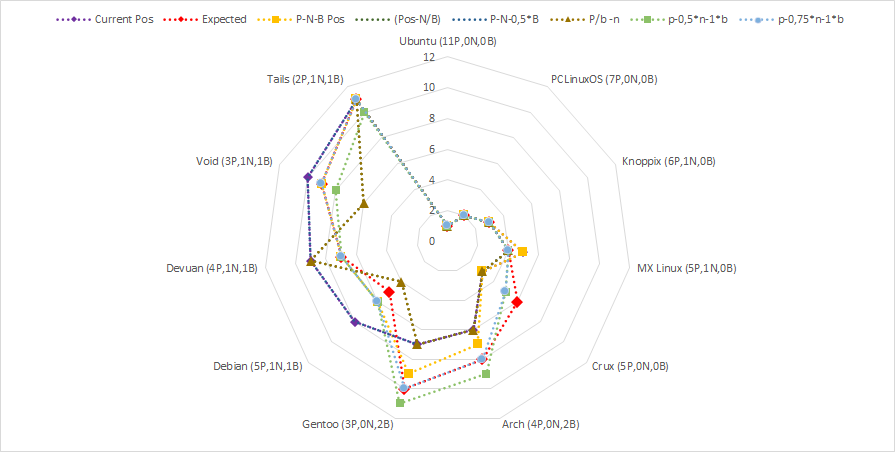

Let's play around with some calculation methods on a sample result with 11 different distributions and a mix of positive, negative, but also blocking hits.

The scoring methods are basically simple combinations of basic arithmetic operations on the three scoring dimensions - just to see how they score!

In comparison, the different scoring methods produce wildly different results - as expected. You can also see how far the current (purple) scoring is from the "expected" order.

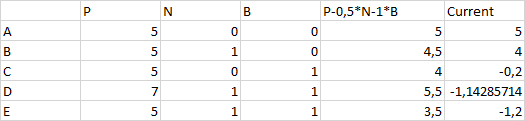

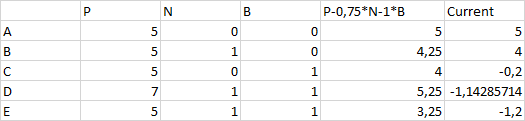

Let's compare all scoring methods with the expected order. On average, most proposals place 5 out of 11 distributions in the same position as the expected order. The only exception is the scoring P - 0.75*N - B, which places 9 out of 11 distributions in the same position as the expected result. That's good!
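As a rough sketch, the weighted scoring and the "same position" comparison could look like this. The function names and the default weights as parameters are assumptions made for illustration. The data is only the three example distributions from earlier in this post, not the 11-distribution sample shown in the charts.

```python
def weighted_score(positive, negative, blocking, n_weight=0.75, b_weight=1.0):
    # P - 0.75*N - B with the default weights; both weights are adjustable.
    return positive - n_weight * negative - b_weight * blocking


def matching_positions(order, expected):
    # Count how many distributions sit at the same index in both orders.
    return sum(1 for got, want in zip(order, expected) if got == want)


# (P, N, B) for the example distributions A, B and C.
hits = {
    "A": (5, 0, 0),
    "B": (5, 1, 0),
    "C": (5, 0, 1),
}

# Highest score first.
order = sorted(hits, key=lambda name: weighted_score(*hits[name]), reverse=True)
print(order, matching_positions(order, ["A", "B", "C"]))
```

With these weights, B scores 4.25 and C scores 4.0, so the blocking hit still costs more than the negative hit. Raising `n_weight` to 1.0 or above would remove that difference.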

Let's compare the current scoring with that scoring.

The resulting order would have been D > A > B > C > E. In comparison: the current scoring would sort them A > B > C > D > E.

Let's compare this version with a slightly adapted version where negative hits have a slightly higher weight: the resulting order will be A > D > B > C > E.

This will cause the order D > A > B > C > E, same as with the previous one.

Conclusion

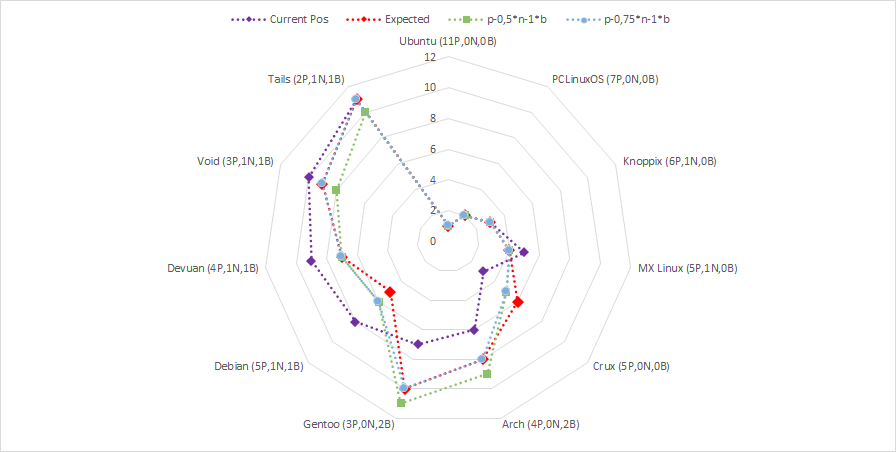

Take another look at these two scorings in comparison with the current and expected ones:

Both scoring methods are quite close to the expected one, even closer than the current scoring. They differ slightly from each other because of the different weightings. I will play around with some weightings for N and B and apply the result in the next Distrochooser updates.

Photo by Nick Hillier on Unsplash